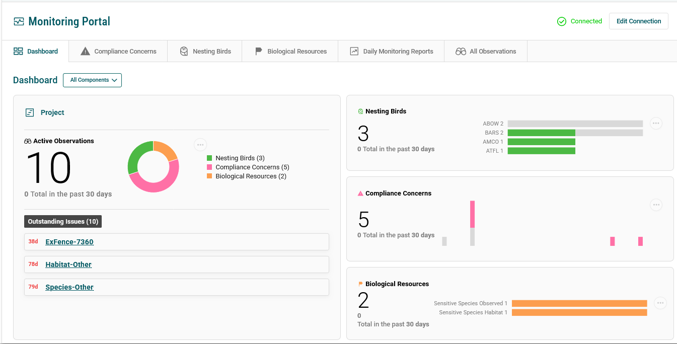

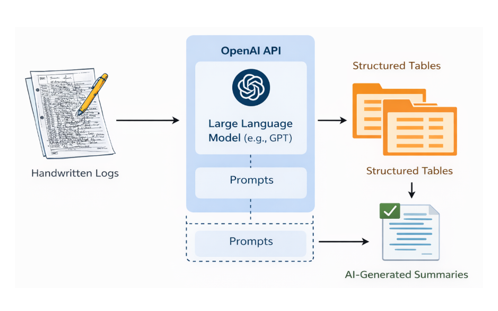

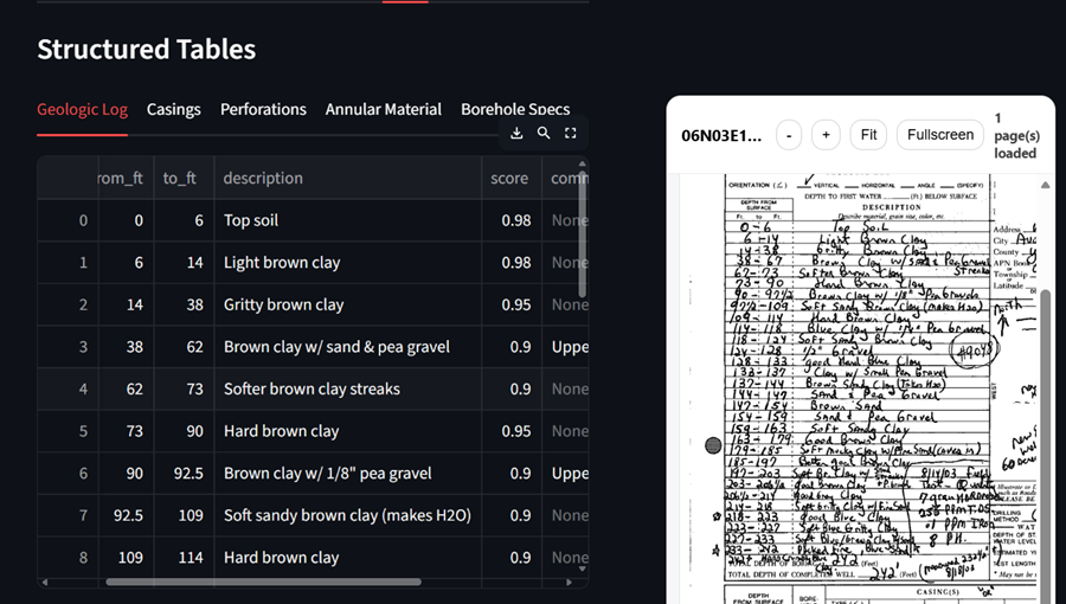

AI Strategy & Advisory Needs

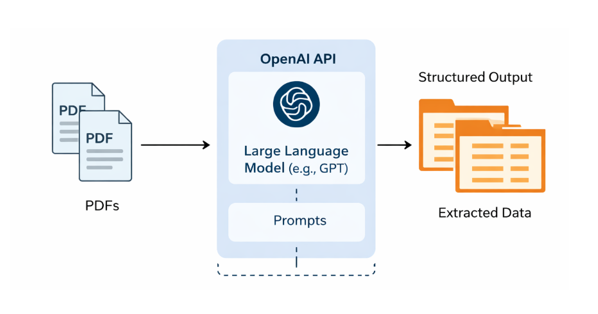

Artificial intelligence (AI) is reshaping how organizations operate, and the pace of change is unprecedented. We help you understand where you are in your AI journey, identify high-impact opportunities and the constraints that stand in their way, and develop a clear, actionable roadmap to put AI to work.